When Light Starts to Remember

Sujal Sokande, AI Engineer & Researcher, Content Contributor

Fri Mar 06 2026

How Quantum Physics and Artificial Intelligence Are Converging

For decades, quantum physics and artificial intelligence developed as separate fields. Physicists studied the behavior of subatomic particles. AI researchers built systems that could learn from data. The two had little reason to speak to each other.

That is changing. A series of research developments published in early 2026 suggests that the boundary between these two fields is dissolving, not through hype or speculation, but through concrete experimental results. The most significant of these comes from an international collaboration involving Italy's National Research Council, the Italian Institute of Technology, and Sapienza University of Rome, published in Physical Review Letters on 18 February 2026.

Their finding, in plain terms: particles of light inside an optical circuit can spontaneously behave like an associative memory, the same kind of memory system that helped lay the mathematical groundwork for modern neural networks.

The research paper: Multiphoton Quantum Simulation of the Generalized Hopfield Memory Model — Published in Physical Review Letters, DOI: 10.1103/945c-11wt, February 2026

1. The Experiment: What Actually Happened

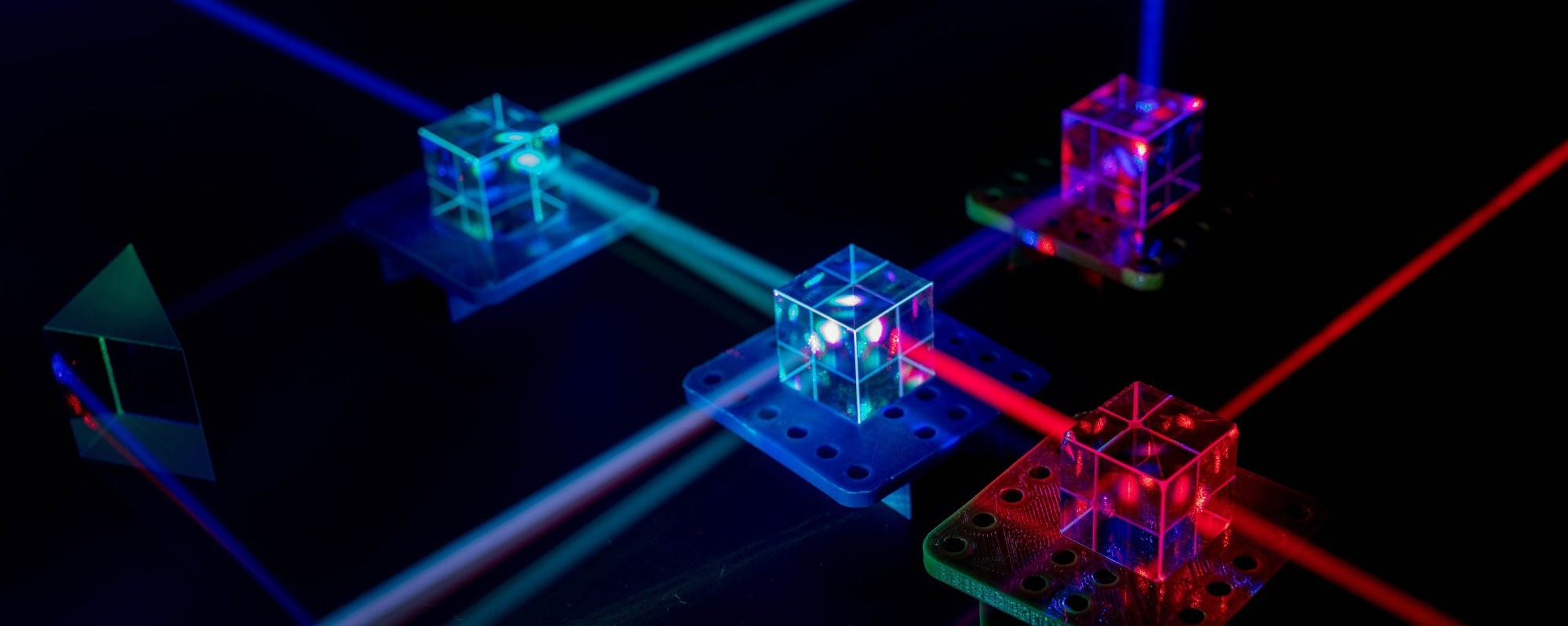

The core question the Italian researchers were exploring is whether quantum systems, specifically photons moving through optical circuits, could simulate the dynamics of a Hopfield Network. To understand why that matters, it helps to know what a Hopfield Network is.

What Is a Hopfield Network?

A Hopfield Network is a mathematical model developed in the 1980s to describe how memory works in biological systems. It is one of the foundational models in computational neuroscience. The basic idea is that a memory is not stored in a single location. Instead, it is distributed across a network of connected units. When you give the system a partial or corrupted version of a stored pattern, the network settles into the nearest complete pattern it knows. This is called associative memory, the same mechanism that lets a human brain recognize a half-remembered face or complete a familiar melody from just a few notes.
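The recall behavior described above can be sketched in a few lines of Python. This is a minimal classical illustration, assuming the standard Hebbian storage rule and one-unit-at-a-time updates; the function names `train_hebbian` and `recall`, the network size, and the number of corrupted units are choices made for this example, not details from the study.

```python
import numpy as np

def train_hebbian(patterns):
    """Build a Hopfield weight matrix from +/-1 patterns using the Hebbian rule."""
    patterns = np.asarray(patterns, dtype=float)
    n_units = patterns.shape[1]
    weights = patterns.T @ patterns / n_units
    np.fill_diagonal(weights, 0.0)  # no self-connections
    return weights

def recall(weights, state, max_sweeps=20):
    """Update one unit at a time until no unit changes (a stable memory)."""
    state = np.array(state, dtype=float)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(state)):
            new_value = 1.0 if weights[i] @ state >= 0 else -1.0
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state

rng = np.random.default_rng(0)
n_units = 100
stored = rng.choice([-1.0, 1.0], size=(3, n_units))   # three random memories
weights = train_hebbian(stored)

# Corrupt 10 of the 100 units of the first memory to make a partial cue.
cue = stored[0].copy()
flipped = rng.choice(n_units, size=10, replace=False)
cue[flipped] *= -1

recovered = recall(weights, cue)
overlap = float(recovered @ stored[0]) / n_units      # 1.0 means a perfect match
print(f"overlap with the original memory: {overlap:.2f}")
```

The memory lives in the whole weight matrix rather than in any single entry, which is the distributed storage the paragraph describes. With only three stored patterns the network is far below its capacity, so the corrupted cue should settle back onto the stored memory.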

Hopfield Networks became important to AI because they provided a mathematical framework for understanding how neural networks can store and retrieve information. Modern large language models and deep learning architectures trace part of their theoretical lineage back to this work. John Hopfield, who first described the network, was awarded the 2024 Nobel Prize in Physics alongside Geoffrey Hinton for the foundational contributions this research made to machine learning.

What the Researchers Found

The Italian team, led by Marco Leonetti at Cnr-Nanotec, built an optical circuit using photonic chips and sent identical photons through it. What they observed was that the statistical behavior of where photons ended up at the output could be described using the same mathematics as a Hopfield Network.

In other words, the photons were not just carrying information from one point to another, as they do in fiber optic cables. They were behaving as the memory units themselves, spontaneously mimicking the dynamics of a neural network without being programmed to do so.

"Instead of using traditional electronic chips, we exploited quantum interference, the phenomenon that occurs in photonic chips when particles of light overlap and interact with one another to encode and retrieve information. In this system, photons are not merely carriers of data, but themselves become the neurons of an associative memory." (Marco Leonetti, Senior Researcher, Cnr-Nanotec)

Source: Leonetti M. et al., Physical Review Letters, February 2026. DOI: 10.1103/945c-11wt

2. The Physics Behind It

The mechanism that makes this possible is called bosonic quantum interference. When identical photons move through a linear optical network, they evolve together in a coherent way. The interference patterns that emerge between them encode information in a structure that turns out to be mathematically equivalent to a Hopfield energy function.
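A textbook case of bosonic interference is two identical photons meeting at a 50:50 beamsplitter (the Hong-Ou-Mandel effect). The sketch below is not the circuit used in the study; it assumes the standard result that, for outcomes with at most one photon per output, the probability amplitude is the permanent of the relevant block of the circuit's unitary matrix. The helper name `permanent` and the beamsplitter matrix are choices made for this example.

```python
import itertools
import numpy as np

def permanent(matrix):
    """Permanent by brute force over permutations (fine for tiny matrices)."""
    n = matrix.shape[0]
    total = 0.0 + 0.0j
    for perm in itertools.permutations(range(n)):
        term = 1.0 + 0.0j
        for row, col in enumerate(perm):
            term *= matrix[row, col]
        total += term
    return total

# 50:50 beamsplitter: a 2x2 unitary acting on two optical modes.
beamsplitter = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)

# One photon enters each input; we ask for one photon at each output.
# For identical photons the amplitude is the permanent of the unitary.
p_identical = abs(permanent(beamsplitter)) ** 2

# Distinguishable particles do not interfere: probabilities add instead,
# which is the permanent of the matrix of squared magnitudes.
p_distinguishable = permanent(np.abs(beamsplitter) ** 2).real

print(f"coincidence probability, identical photons:       {p_identical:.3f}")
print(f"coincidence probability, distinguishable photons: {p_distinguishable:.3f}")
```

The two paths that would send one photon to each output cancel exactly for identical photons, so the output statistics depend on the circuit as a whole rather than on each photon separately. That collective dependence is what allows the interference pattern to be written as an energy function.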

The researchers mapped the binary phase settings of the optical circuit, each of which can take one of two values, onto the spin variables used in classical physics models. From there, they showed that the output statistics of the photons follow the same mathematical rules as a generalized version of the Hopfield model, specifically what is called a p-body Hopfield model with multisynaptic connections.

The key number here is p, which in their setup equals two times the number of photons. With two photons, p equals four, giving a four-body Hopfield model. This matters because in higher-order Hopfield models, memory capacity grows as a higher power of the network size (on the order of N^(p-1) for N units in classical p-body models) rather than linearly. In practical terms, a network of the same size can store and retrieve more memories.
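To make the mapping concrete, here is a minimal sketch assuming a generic textbook form of the p-body Hopfield energy, in which each stored pattern contributes its overlap with the current state raised to the power p. This is an illustrative stand-in, not the exact expression derived in the paper, and the names `phases_to_spins`, `interaction_order`, and `p_body_energy` are hypothetical.

```python
import numpy as np

def phases_to_spins(phases):
    """Map binary phase settings (0 or pi) to spins (+1 or -1) via cos(phase)."""
    return np.rint(np.cos(phases)).astype(int)

def interaction_order(n_photons):
    """The rule quoted in the text: p is twice the number of photons."""
    return 2 * n_photons

def p_body_energy(spins, patterns, p):
    """Generic p-body Hopfield energy: -N * sum over patterns of overlap**p."""
    n_units = len(spins)
    overlaps = patterns @ spins / n_units
    return -n_units * float(np.sum(overlaps ** p))

rng = np.random.default_rng(1)
n_units = 16
patterns = rng.choice([-1, 1], size=(4, n_units))

# Phase settings that encode the first stored pattern.
phases = np.where(patterns[0] == 1, 0.0, np.pi)
spins = phases_to_spins(phases)

p = interaction_order(n_photons=2)   # two photons give a four-body model
stored_energy = p_body_energy(spins, patterns, p)
random_energy = p_body_energy(rng.choice([-1, 1], size=n_units), patterns, p)
print(f"p = {p}")
print(f"energy at a stored pattern:      {stored_energy:.2f}")
print(f"energy at a random spin setting: {random_energy:.2f}")
```

Raising the overlap to a higher power makes the energy wells around stored patterns steeper and narrower, so a stored pattern typically sits well below a random configuration. That sharper separation is the intuition behind the larger capacity of higher-order models.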

The Memory Blackout Problem

The experiment also revealed a fundamental limit. When the amount of stored information is small relative to the system size, the photons retrieve patterns accurately. Quantum coherence keeps everything ordered. But as the information load increases past a critical threshold, the system undergoes a phase transition. The ordered retrieval state collapses into what physicists call a spin glass phase, a state of disorder where the system can no longer consistently retrieve any stored pattern. Memory effectively blacks out.

This is not a flaw in the experiment. It is a real physical limit, and it mirrors limits that exist in biological memory systems. Gennaro Zanfardino, the study's lead author from the University of Salento, described it this way:

"When the amount of stored information is limited, the system is able to retrieve it correctly thanks to quantum coherence. However, as the volume of data increases, a transition emerges toward a memory black-out phase, in which the system enters a state of disorder, technically defined as a spin glass, losing its retrieval capability." (Gennaro Zanfardino, University of Salento)

Source: Zanfardino G. et al., Physical Review Letters, February 2026
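The same kind of breakdown can be reproduced in a classical Hopfield network, which gives a feel for what the blackout looks like. The sketch below is a classical two-body stand-in, not a simulation of the photonic experiment; the function name `retrieval_overlap`, the network size, and the loads tested are assumptions for illustration. In the classical model with random patterns, retrieval is known to fail above a load of roughly 0.14 patterns per unit in the large-network limit.

```python
import numpy as np

def retrieval_overlap(n_units, n_patterns, seed=0, sweeps=10):
    """Store random patterns, start on pattern 0, and see how much of it survives."""
    rng = np.random.default_rng(seed)
    patterns = rng.choice([-1.0, 1.0], size=(n_patterns, n_units))
    weights = patterns.T @ patterns / n_units
    np.fill_diagonal(weights, 0.0)   # no self-connections

    state = patterns[0].copy()       # begin exactly on a stored memory
    for _ in range(sweeps):
        for i in rng.permutation(n_units):
            state[i] = 1.0 if weights[i] @ state >= 0 else -1.0
    return float(state @ patterns[0]) / n_units

n_units = 200
for n_patterns in (5, 20, 60, 200):
    load = n_patterns / n_units
    overlap = retrieval_overlap(n_units, n_patterns)
    print(f"load {load:.3f}: overlap with the stored memory {overlap:.2f}")
```

At low load the network stays on the memory it started from. At high load it drifts away even though it began exactly on a stored pattern, because interference from the other patterns outweighs the signal. Small networks blur the threshold, so the transition appears gradual here rather than sharp.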

The study also connects this finding to work by Giorgio Parisi, who won the 2021 Nobel Prize in Physics for his research on complex systems and spin glasses. The researchers argue that the same laws of disorder Parisi studied in classical physical systems appear again in quantum photonic circuits. The connection is not coincidental. It suggests something deeper is at work in how disorder and memory interact, across both classical and quantum regimes.

3. Why This Matters for AI

The Energy Problem Is the Defining Challenge of AI Right Now

To understand why this research is practically significant, it helps to grasp the scale of the energy problem facing AI infrastructure today.

Training GPT-3, one of the earlier large language models, emitted approximately 500 metric tons of CO2 equivalent, comparable to driving a car for about one million miles. Annual inference operations for that same model are estimated to produce around 12,800 metric tons of CO2 equivalent, about 25 times the training emissions, in a single year. The latest AI models are larger still. Meta's Llama-4 family, released in 2025, includes a model with about two trillion parameters, roughly 11 times the 175 billion parameters of GPT-3.

Source: Communications Physics, Nature Publishing Group, October 2025

Goldman Sachs projects that data center power consumption will increase by 160 percent by 2030. The National Grid's chief executive has estimated a six-fold increase over the next decade. The scale of infrastructure required to run AI at current and projected levels is straining power grids and represents a genuine sustainability challenge.

Traditional silicon chips, which process information using electrons on CMOS circuits, are approaching physical limits in how much more efficient they can get. The semiconductor industry's ability to keep shrinking transistors, which has driven computing efficiency for fifty years, is running into fundamental constraints.

Photonics as a Path Forward

Photonic computing processes information using light rather than electrons. The advantages are well established in research and increasingly validated in practice. Light travels faster than electrons in metal wires, is not subject to the same resistive losses, and can carry multiple signals simultaneously through a single channel on separate wavelengths with minimal interference between them, a technique called wavelength division multiplexing.

The UK Photonics Leadership Group estimates that integrated photonics could reduce data center energy consumption by more than 50 percent by 2035. The German company Q.ANT, which commercially launched a photonic processor in 2025, claims up to 30 times the energy efficiency of conventional CMOS technologies for applicable workloads.

Source: UK Photonics Leadership Group, UK Photonics 2035: The Vision. Q.ANT NPS photonic processor launch documentation, 2025

NVIDIA has moved in this direction commercially. Its Quantum-X Photonics and Spectrum-X Photonics switches, which integrate silicon photonics directly onto the switch integrated circuit, are claimed to be up to 3.5 times more power efficient than conventional pluggable optical transceivers. These systems were announced for commercial availability in 2026.

Source: NVIDIA Technical Blog, September 2025. TACC and CoreWeave partnership announcements, November 2025

What the Italian study adds to this picture is a more fundamental possibility. Not just using light to move data more efficiently between processing units, but using light to do the computation itself, specifically the kind of memory-based computation that is central to how neural networks work.

"These results open new perspectives for the use of quantum optics and integrated photonics in the development of artificial intelligence systems. Devices of this kind could ensure high performance with drastically lower energy consumption compared to current data centers." (Luca Leuzzi, Research Director, Cnr-Nanotec)

Source: Leuzzi L., Physical Review Letters press commentary, February 2026

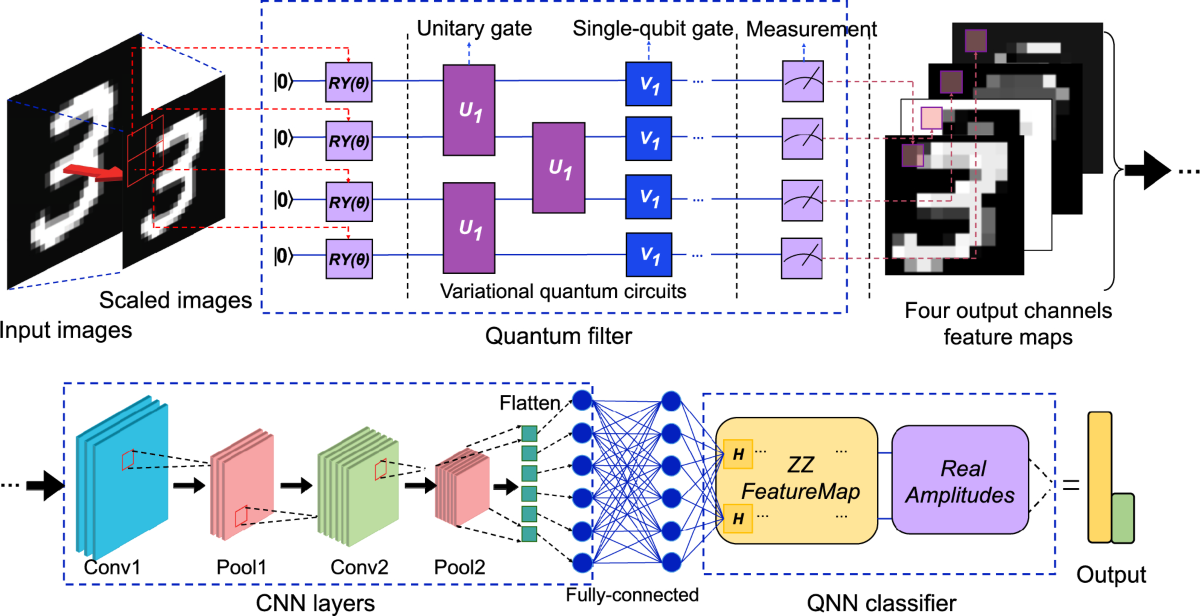

Hybrid Networks: Combining Classical and Quantum Layers

A parallel line of research published in Nature's npj Unconventional Computing journal in December 2025 explored hybrid quantum-classical photonic neural networks. The research team found that replacing layers of classical neurons in a photonic network with quantum circuit layers produced networks that matched the performance of classical networks nearly twice their size.

This suggests a practical near-term path that does not require replacing entire computing systems. Hybrid architectures could allow AI systems to incorporate quantum photonic components in specific layers where they provide the greatest advantage, while retaining classical computing for the rest of the pipeline.

Source: Hybrid quantum-classical photonic neural networks, npj Unconventional Computing, Nature, December 2025

4. What Stage Are We Actually At?

It is important to be clear about where this research sits in terms of practical deployment.

The Italian study is a fundamental physics result. It demonstrates a theoretical and experimental connection between quantum photonic systems and neural network models. It is published in one of the most rigorous physics journals in the world. It is not a product announcement.

Photonic computing more broadly is still largely at what the industry would call the pre-commercial stage. In late 2025, scientists announced the development of photonics-based computer memory devices. AI accelerators capable of executing neural network operations with light have been demonstrated in academic settings. Q.ANT has a commercial product, and NVIDIA has commercial photonic networking switches coming in 2026. But no fully functional general-purpose photonic computer exists yet for real-world deployment at scale.

Source: Data Center Knowledge, February 2026. Q.ANT commercial documentation

The research establishes that photons in optical circuits can naturally perform the same operations as neural network memory systems. The engineering challenge now is scaling this into practical hardware.

The researchers themselves frame the near-term use of their system as a simulator and testbed, a platform that can explore the behavior of complex disordered systems that are difficult or impossible to simulate on conventional computers. This is useful in its own right. Many problems in material science, drug discovery, financial modeling, and logistics optimization involve exactly this kind of complex disordered landscape.

The longer-term implication is that photonic platforms could become native hardware for certain types of AI computation, not just a faster way to move data, but a fundamentally different way to process it.

5. The Broader Research Context

This result does not appear in isolation. Several parallel research threads are converging in this area.

Quantum Hopfield Networks: Prior Theoretical Work

Researchers at MIT and the University of Toronto published a theoretical framework for quantum Hopfield networks in 2018, showing that an exponentially large network could be stored in a polynomial number of quantum bits by encoding the network into the amplitudes of quantum states. This produces a quantum computational complexity that is logarithmic in the dimension of the data, a significant theoretical advantage over classical approaches.

Source: Rebentrost P., Bromley T.R., Weedbrook C., Lloyd S. Quantum Hopfield Neural Network. Physical Review A 98, 042308, 2018
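The counting behind that claim can be shown with a short sketch of amplitude encoding: a vector with d entries fits into the amplitudes of about log2(d) qubits. The code below only does the classical bookkeeping, assuming the vector is zero-padded to the next power of two and normalized; it says nothing about how costly it is to prepare such a state on real hardware, and the function name `amplitude_encode` is hypothetical.

```python
import numpy as np

def amplitude_encode(vector):
    """Return (n_qubits, amplitudes) for a classical vector stored as a quantum state."""
    vector = np.asarray(vector, dtype=float)
    # Smallest number of qubits whose 2**n amplitudes can hold the vector.
    n_qubits = max(1, (len(vector) - 1).bit_length())
    padded = np.zeros(2 ** n_qubits)
    padded[: len(vector)] = vector
    norm = np.linalg.norm(padded)
    if norm == 0:
        raise ValueError("cannot encode the zero vector")
    return n_qubits, padded / norm   # squared amplitudes must sum to 1

pattern = np.random.default_rng(2).choice([-1.0, 1.0], size=1024)
n_qubits, amplitudes = amplitude_encode(pattern)
print(f"{len(pattern)} pattern entries fit in {n_qubits} qubits")
print(f"sum of squared amplitudes: {float(np.sum(amplitudes ** 2)):.6f}")
```

Doubling the length of the pattern adds only one qubit, which is the sense in which the resource count is logarithmic in the dimension of the data.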

Open Quantum Hopfield Networks

Researchers at the University of Nottingham and other institutions have extended the model to open quantum systems, where the network can exchange energy and information with its environment. A 2025 study published in Physical Review Research introduced a discrete modern Hopfield network model in this open quantum framework, showing qualitatively new behaviors compared to closed quantum systems, including additional stable states and limit cycle phases not seen in classical networks.

Source: Physical Review Research 7, 033159, August 2025

Hybrid Quantum-Classical Photonics at Vienna

Research from the University of Vienna published in June 2025 demonstrated that photonic quantum chips could make AI computations both faster and more energy efficient in practical hybrid configurations. The work, covered by ScienceDaily, showed that integrating quantum photonic components into AI processing chains produced measurable improvements in performance metrics.

Source: University of Vienna, ScienceDaily, June 2025

6. Key Takeaways

For anyone following developments at the intersection of physics, computing, and AI, here is what this moment adds up to:

- The connection between quantum physics and AI is no longer purely theoretical. The Italian study published in Physical Review Letters provides experimental evidence that quantum optical systems naturally exhibit neural network dynamics.

- The energy problem facing AI is real and urgent. Current trajectories of AI infrastructure growth are not sustainable with conventional silicon computing. Photonic computing represents a credible path toward substantially lower energy consumption per computation.

- Hybrid approaches are the near-term reality. Full replacement of electronic computing with photonic systems is not imminent. But hybrid architectures that incorporate photonic or quantum photonic components in specific parts of AI pipelines are being developed commercially right now.

- The physics of disorder connects quantum and classical systems in unexpected ways. The spin glass transition observed in this experiment mirrors limits in biological memory and classical neural network models. Understanding where these limits come from and how to work around them is an open research question with direct implications for how AI systems are designed.

- Practical photonic AI hardware is on a near-term commercial roadmap. NVIDIA's photonic switches, Q.ANT's commercial processor, and research from multiple university groups all point to 2025 to 2028 as the window when photonic components begin entering AI infrastructure at scale.

Sources and References

The following sources were used in the preparation of this analysis:

- Zanfardino G. et al. Multiphoton Quantum Simulation of the Generalized Hopfield Memory Model. Physical Review Letters. DOI: 10.1103/945c-11wt. February 2026.

- Hybrid quantum-classical photonic neural networks. npj Unconventional Computing, Nature Publishing Group. December 2025.

- Analysis of Discrete Modern Hopfield Networks in Open Quantum System. Physical Review Research 7, 033159. August 2025.

- Photonics for Sustainable AI. Communications Physics, Nature Publishing Group. October 2025.

- Rebentrost P., Bromley T.R., Weedbrook C., Lloyd S. Quantum Hopfield Neural Network. Physical Review A 98, 042308. 2018.

- NVIDIA Technical Blog. Scaling AI Factories with Co-Packaged Optics for Better Power Efficiency. September 2025.

- UK Photonics Leadership Group. UK Photonics 2035: The Vision.

- Q.ANT. Native Processing Server NPS documentation. 2025.

- Data Center Knowledge. Forget Quantum? Why Photonic Data Centers Could Arrive First. February 2026.

- University of Vienna. Photonic quantum chips are making AI smarter and greener. ScienceDaily. June 2025.

- The Brighter Side of News. The unprecedented link between quantum physics and artificial intelligence. February 2026.

- Phys.org. When light thinks like the brain: The connection between photons and artificial memory. February 2026.


Sujal Sokande

AI Engineer & Researcher, Content Contributor

Sujal Sokande is an AI Engineer and researcher specializing in production-scale AI systems and large language models. Currently pursuing a Diploma in Computer Science (AI & Data Science) at MIT World Peace University, he has architected and deployed enterprise conversational AI solutions processing over 500,000 documents across multiple production websites. His work focuses on multi-agent RAG systems, semantic search optimization, and distributed data processing on Google Cloud Platform. A published researcher and branch topper, Sujal combines deep technical expertise in AI/ML frameworks with practical experience in building scalable AI infrastructure that solves real-world challenges.
